More than a year has passed since the first free *cough* monthly *cough* Blender workshop organized by François Zajega and me. And since I took it upon myself to take notes during the last one, here’s a quick report of what was discussed and achieved.

Morning time is for coffee and “show and tell”. Since François and David had both attended the #BConf for the first time, we took the opportunity to get their feedback on this major event in the Blender community. Here’s what they had to say.

Their overall feeling about the conference was positive. They pointed out that it was about a single piece of software, without any discussion of the operating system it runs on, and that it was about finding solutions, methodology and good practices, and about how everyone uses Blender to achieve their goals. They were also surprised by the variety of profiles attending the event: university researchers, 3D professionals, amateurs and even sales representatives, although most of the crowd revolved around education. And as with any free software conference, feeling part of a community is a great motivation boost.

On the downside, they were a bit disappointed by the uneven quality of the panels, and wondered whether there had been any screening at all. They were also a bit skeptical about the location of the event. More rooms, each focused on a topic, are on their wish-list.

To end this conversation, I asked which presentation they would pick if they could remember only one:

François chose Shigeto Maeda’s “Generative Modelling Project”.

David chose Helmut Satzger’s “How to render a Blender movie on a supercomputer”.

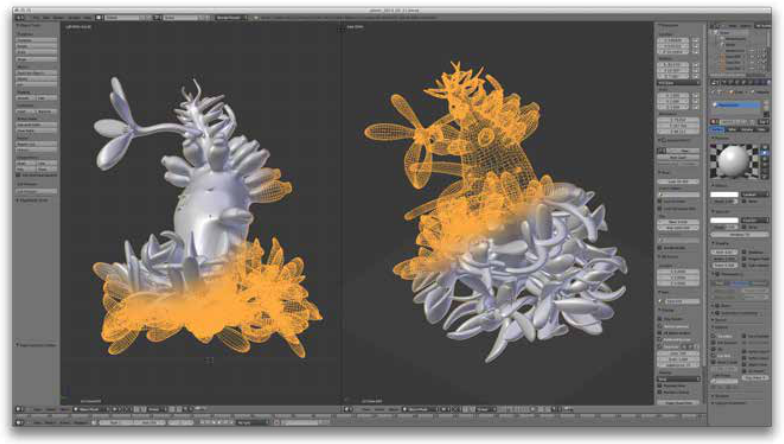

After that and for the rest of the day, we jumped into some Blender manipulation. For those who don’t know about our workshop yet, we focus on Blender from a coding/scripting perspective. This time, we wanted to explore Blender from the command-line with the goal of finding a way to extract useful elements out of a .blend file (without opening the Blender interface).

`man blender` is your friend, of course.

`blender --background --python myscript.py` is the way to start a chrome-less Blender with a custom Python script filled with bpy API goodies.

Even so, it took us a frustrating two hours to figure out what is clearly written at the end of the manual: “Arguments are executed in the order they are given.” This means you have to pass your .blend file BEFORE the Python script that will act upon it.
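To make the ordering rule concrete, here is a minimal sketch of a Python helper that builds the command line with the .blend file placed before `--python`. The helper name `build_blender_command` and the file names `scene.blend` and `myscript.py` are placeholders for illustration, not part of our workshop script.

```python
import subprocess


def build_blender_command(blend_file, script, blender="blender"):
    """Return the argument list for a headless Blender run.

    Blender executes its arguments in the order they are given, so the
    .blend file has to appear before --python; otherwise the script runs
    before the file is loaded.
    """
    return [blender, "--background", blend_file, "--python", script]


if __name__ == "__main__":
    # Placeholder file names, used only for illustration.
    cmd = build_blender_command("scene.blend", "myscript.py")
    print(" ".join(cmd))
    # Uncomment to actually launch Blender (it must be on your PATH):
    # subprocess.run(cmd, check=True)
```

Swapping the last two pairs of arguments would still start Blender, but the script would then act on the default scene instead of the file you meant to process.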

After that, it was just a matter of reading the API docs to come up with a script of about 40 lines that does the extracting trick well enough to call it a successful workshop.

You can download it, test it and, if you find any issues, file them on our code repository. The script will extract, in separate folders, any text, Python script, image and/or mesh (as .obj and .stl files) from any packed .blend file you supply. Put it to good use.
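For readers who want a feel for what such a script looks like, here is a rough sketch of the text-extraction part only. It is not the script from our repository: the function names `safe_name` and `extract_texts` and the `extracted` output folder are assumptions made for this example. It relies on `bpy.data.texts` and `Text.as_string()` from the bpy API, and leaves out images and meshes because the export operators differ between Blender versions.

```python
import os


def safe_name(name):
    """Turn a data-block name into a file name without path separators."""
    return name.replace("/", "_").replace("\\", "_")


def extract_texts(texts, out_dir):
    """Write every text data-block to out_dir/texts/ and return the paths.

    `texts` is any iterable of objects exposing `.name` and `.as_string()`,
    which is what bpy.data.texts provides inside Blender.
    """
    target = os.path.join(out_dir, "texts")
    os.makedirs(target, exist_ok=True)
    written = []
    for text in texts:
        path = os.path.join(target, safe_name(text.name))
        with open(path, "w", encoding="utf-8") as handle:
            handle.write(text.as_string())
        written.append(path)
    return written


if __name__ == "__main__":
    try:
        import bpy  # only importable when run by Blender itself
    except ImportError:
        bpy = None
    if bpy is not None:
        # "extracted" is a hypothetical output folder name.
        extract_texts(bpy.data.texts, os.path.join(os.getcwd(), "extracted"))
```

Run it with the .blend file first, for example `blender --background yourfile.blend --python extract_texts.py`, where both file names are placeholders.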

If you liked this, share it and come to our next workshop. Announcements will be made on the BBUG.

Comments

7 responses to “Blender-Brussels november report”

Interesting. I’ve been using blender executed from command line for my work for about a year now. It’s a great and powerful tool that can be used to automate some 3D processes (e.g. conversion, optimization, rendering, texture baking). As far as I know you can’t pass any arguments (e.g. path to some external file to be imported and processed) to the script which is executed after starting blender, but you can write all the important data (file paths, etc.) to some file (saved in a fixed location relative to blender), which is read by the script.
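The file-based workaround described in this comment could look roughly like the sketch below. The function name `load_job` and the `input` and `output` keys are assumptions made for illustration; the comment does not say which file format or fields were actually used.

```python
import json


def load_job(config_path):
    """Read the job description that the calling program wrote to disk.

    The file is expected to be JSON with at least an "input" and an
    "output" path (hypothetical keys chosen for this example).
    """
    with open(config_path, encoding="utf-8") as handle:
        job = json.load(handle)
    missing = [key for key in ("input", "output") if key not in job]
    if missing:
        raise KeyError("missing keys in job file: " + ", ".join(missing))
    return job


# Inside the script run by Blender, one might then do something like:
#   job = load_job("/fixed/location/job.json")   # hypothetical path
#   ...import job["input"], process it, export to job["output"]...
```

Note that Blender’s command-line help also documents a `--` separator: arguments after it are left untouched by Blender and can be read by the script from `sys.argv`, which may make the intermediate file unnecessary in simple cases.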

Hello Lendrom. Thanks for your comment. It’s a nice idea you suggest (processing imported external files through the command line). Do you have any reference to tests you made regarding this? I understand it doesn’t seem to be possible, but I’d like to find out how you tried. If you have any link to your command-line Blender work, I would love to see it.

Well, I have it working in a script I wrote for importing data to a webGL-based 3D geoportal. In short, I use python (with ArcGIS’ arcpy module) to load kmz (collada + kml) building models into a PostgreSQL database (I need it there for some testing of spatial relations between the kmz and other spatial data), and then export it back to collada (ArcGIS does a much better job importing collada than blender and it exports collada in a simple constant form, which is much easier to process automatically in blender than colladas produced e.g. in SketchUp) and then I do some stuff with the resulting collada in Blender (import collada, remove unnecessary geometry, bake consolidated textures, generate lower resolution meshes with smaller textures, generate metadata which our geoportal understands and which describe the models). Then I “do some more stuff” with the exported obj files in pure python. It’s not flawless but it works. More or less :)

The other software I considered for automatic mesh processing is MeshLab (meshlabserver), but in my opinion it is not as powerful as blender (mostly thanks to the built-in python in the latter).

Wow, what a pipeline :) Thanks for sharing. Wish I could see it all working in some way. Is it a research project? Can you point to your webGL geoportal?

Sorry for the late reply. The geoportal can be accessed at 3d.torun.pl and requires (of course) a browser and a graphics card with webGL support, and it’s best viewed in Chrome or Opera (thanks to webp support). If your system does not support it, the portal falls back to a standard 2D maps geoportal. In 3D view, in the lower right corner of the screen, you’ve got 3 presets of combinations of visible layers: LiDAR point cloud, symbolic 3D map, and textured 3D models. I’d be happy if you let me know what you think.

Hey. Really nice website. Impressive to see a city displayed like this. The loading of the building texture is actually fascinating. :)

Too bad I don’t understand the language and so the purpose of the website. But it’s a great piece of work. I hope we can invite you to Brussels some day to have you talk about your way of using Blender. :)

I’m glad you liked it. I added limited support for an English version (for most of the GUI). It is enabled automatically if your browser’s language is set to English.

The webportal is a city GIS portal (there is also a 2D module there) for residents and tourists.

The main advantage, I guess, is that it is not closed (as e.g. CityEngine webGL scenes are), but dynamically gets a substantial part of its data from spatial data (GIS) servers. So when you e.g. add (using some GIS software) a tree (geometry and appropriate attributes) to a ‘vegetation’ table in your database that is served by a GIS server and configured in our application, you will instantly have the tree added to your portal as a symbolized 3D model.